Research Overview

My research develops trustworthy deep learning frameworks, with a strong focus on uncertainty quantification and multimodal fusion, so that artificial intelligence deployed in high-stakes healthcare environments is accurate, explainable, and safe.

Trustworthy Multimodal Fusion for Cancer Survival Analysis

Description: Pioneering uncertainty-aware AI architectures that integrate heterogeneous medical data to deliver reliable, interpretable patient survival predictions.

Challenge: Clinical survival analysis relies on highly diverse, multimodal data (such as whole-slide images, clinical records, and genomics). However, this data is often noisy, conflicting, and right-censored. Traditional deep learning models struggle to fuse these heterogeneous sources effectively while providing the transparency and uncertainty quantification that clinicians require to trust the predictions.

Approach: Developing evidential multimodal fusion frameworks, such as EsurvFusion and DPsurv, grounded in belief function theory and epistemic random fuzzy sets. These models explicitly quantify both data and model uncertainty, and use reliability discounting and dual-prototype fusion to down-weight unreliable sources when integrating heterogeneous data streams.
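
To make the idea of reliability discounting concrete, here is a minimal sketch in the classical discrete belief function setting: Shafer discounting followed by Dempster's rule of combination. It is an illustration only and not the EsurvFusion or DPsurv implementation, which work with epistemic random fuzzy sets and learned reliabilities. The two-element frame, the modality names, the mass values, and the reliability coefficients are all hypothetical.

```python
from itertools import product


def discount(mass, reliability, frame):
    """Shafer discounting: keep a fraction `reliability` of each mass and
    transfer the remainder to the whole frame (total ignorance)."""
    discounted = {focal: reliability * value for focal, value in mass.items()}
    discounted[frame] = discounted.get(frame, 0.0) + (1.0 - reliability)
    return discounted


def dempster(m1, m2):
    """Dempster's rule: multiply masses, intersect focal sets, renormalise.
    Returns the combined mass function and the degree of conflict."""
    combined, conflict = {}, 0.0
    for (a, va), (b, vb) in product(m1.items(), m2.items()):
        intersection = a & b
        if intersection:
            combined[intersection] = combined.get(intersection, 0.0) + va * vb
        else:
            conflict += va * vb  # mass that would fall on the empty set
    if 1.0 - conflict < 1e-12:
        raise ValueError("sources are in total conflict")
    return {s: v / (1.0 - conflict) for s, v in combined.items()}, conflict


# Hypothetical two-outcome frame and two conflicting modalities.
LOW, HIGH = frozenset({"low_risk"}), frozenset({"high_risk"})
FRAME = LOW | HIGH
m_image = {LOW: 0.9, FRAME: 0.1}      # assumed noisy imaging evidence
m_clinical = {HIGH: 0.8, FRAME: 0.2}  # assumed clinical-record evidence

# Assumed reliabilities: the image source is only half trusted.
m_image_disc = discount(m_image, 0.5, FRAME)
m_clinical_disc = discount(m_clinical, 1.0, FRAME)
fused, conflict = dempster(m_image_disc, m_clinical_disc)
```

With these assumed numbers, discounting moves more than half of the imaging evidence to the whole frame, so the fused mass on high risk is 0.6875 and 0.171875 remains on the frame as explicit ignorance, rather than the two sources cancelling each other out.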

Key Findings: The developed models establish new state-of-the-art benchmarks in predictive accuracy and interpretability for time-to-event prediction. By actively learning the reliability of different medical modalities and quantifying uncertainty, these frameworks prevent overconfidence in noisy data and provide clear, clinically actionable insights.

Related Publications: EsurvFusion: An evidential multimodal survival fusion model based on Epistemic random fuzzy sets (IEEE Transactions on Fuzzy Systems, 2025) | DPsurv: Dual-Prototype Evidential Fusion for Uncertainty-Aware and Interpretable Whole-Slide Image Survival Prediction (arXiv, 2025)

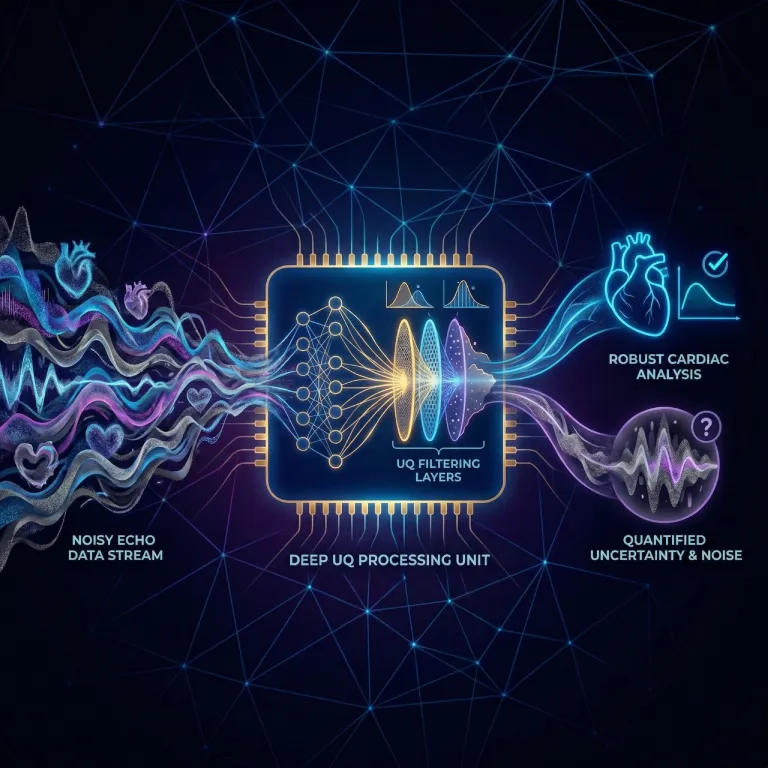

Uncertainty Quantification in Medical Image Analysis

Description: Developing deep evidential learning frameworks grounded in belief function theory to quantify uncertainty and improve reliability in medical image segmentation.

Challenge: Medical images, such as MRI and PET scans, are frequently degraded by noise, artifacts, or ambiguous tissue boundaries. Standard deep learning models output deterministic segmentation masks without indicating their confidence levels. In critical clinical scenarios, these "black box" models can produce highly confident but incorrect predictions, risking patient safety.

Approach: Integrating belief function theory with deep neural networks to create "deep evidential networks." This approach uses contextual discounting to handle conflicting multimodal data, alongside semi-supervised learning to make the most of limited expert-annotated datasets.
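
As a rough illustration of contextual discounting, the sketch below applies it to contour functions (per-class plausibilities): a class-specific reliability coefficient pulls the plausibility of that class toward 1, which makes the source uninformative for that class, and sources are then fused by multiplying contour functions, a property of Dempster's rule. This is a simplified sketch under stated assumptions rather than the published model; the class names, plausibility values, and reliability coefficients are hypothetical, and in a deep network the coefficients would be learned rather than fixed by hand.

```python
def contextual_discount(plausibility, reliability):
    """Discount a contour function class by class.

    reliability[k] is the assumed degree of belief that the source is
    reliable when the true class is k; 0 yields plausibility 1 (vacuous).
    """
    return [1.0 - beta + beta * pl for pl, beta in zip(plausibility, reliability)]


def fuse_contours(contours):
    """Multiply contour functions class-wise and normalise to sum to 1."""
    fused = [1.0] * len(contours[0])
    for contour in contours:
        fused = [f * pl for f, pl in zip(fused, contour)]
    total = sum(fused)
    if total == 0.0:
        raise ValueError("sources are in total conflict")
    return [f / total for f in fused]


# Hypothetical classes: background, edema, tumor.
pl_t1 = [0.9, 0.3, 0.2]      # assumed output of a T1 branch
pl_flair = [0.2, 0.4, 0.9]   # assumed output of a FLAIR branch
beta_t1 = [0.9, 0.9, 0.2]    # T1 assumed unreliable for the tumor class
beta_flair = [0.9, 0.9, 0.9]

pl_t1_disc = contextual_discount(pl_t1, beta_t1)
pl_flair_disc = contextual_discount(pl_flair, beta_flair)
fused_contour = fuse_contours([pl_t1_disc, pl_flair_disc])
```

Because the T1 source is given low reliability for the tumor class only, its low tumor plausibility is largely neutralised, while its opinion on the other classes is kept almost intact.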

Key Findings: By modeling "masses of belief," these frameworks achieve high segmentation accuracy while simultaneously generating spatial uncertainty heatmaps. The AI actively learns to discount unreliable imaging contexts and can explicitly declare "ignorance" when data is insufficient, directly flagging high-risk areas for clinical review.
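
The following sketch shows one way per-pixel masses of belief could be turned into a segmentation and an uncertainty map. It assumes, as a simplification, that each pixel carries mass only on single classes and on the whole frame (the "ignorance" mass), uses the pignistic transform as the decision rule, and flags pixels whose ignorance exceeds a hypothetical threshold. The source does not state which decision rule or threshold is used, so these are illustrative choices, and the tiny 2x2 "image" is made up.

```python
def pignistic(singleton_masses, ignorance):
    """Spread the ignorance mass evenly over the classes."""
    share = ignorance / len(singleton_masses)
    return [m + share for m in singleton_masses]


def segment_with_review_flags(mass_map, threshold=0.5):
    """Return per-pixel labels, ignorance values, and review flags.

    Each pixel is a tuple (m_class_0, ..., m_class_K-1, m_ignorance).
    """
    labels, uncertainty, flagged = [], [], []
    for row in mass_map:
        label_row, unc_row, flag_row = [], [], []
        for *singletons, ignorance in row:
            betp = pignistic(singletons, ignorance)
            label_row.append(max(range(len(betp)), key=betp.__getitem__))
            unc_row.append(ignorance)
            flag_row.append(ignorance > threshold)
        labels.append(label_row)
        uncertainty.append(unc_row)
        flagged.append(flag_row)
    return labels, uncertainty, flagged


# Hypothetical 2x2 mass map with classes (background, tumor) plus ignorance.
mass_map = [
    [(0.90, 0.05, 0.05), (0.10, 0.80, 0.10)],
    [(0.30, 0.10, 0.60), (0.05, 0.15, 0.80)],
]
labels, uncertainty, flagged = segment_with_review_flags(mass_map)
```

In this toy example the top row is segmented with low ignorance, while both pixels in the bottom row still receive a label but are flagged for review because most of their mass sits on the whole frame.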

Related Publications: A review of uncertainty quantification in medical image analysis: probabilistic and non-probabilistic methods (Medical Image Analysis, 2024) | Deep evidential fusion with uncertainty quantification and reliability learning for multimodal medical image segmentation (Information Fusion, 2024)

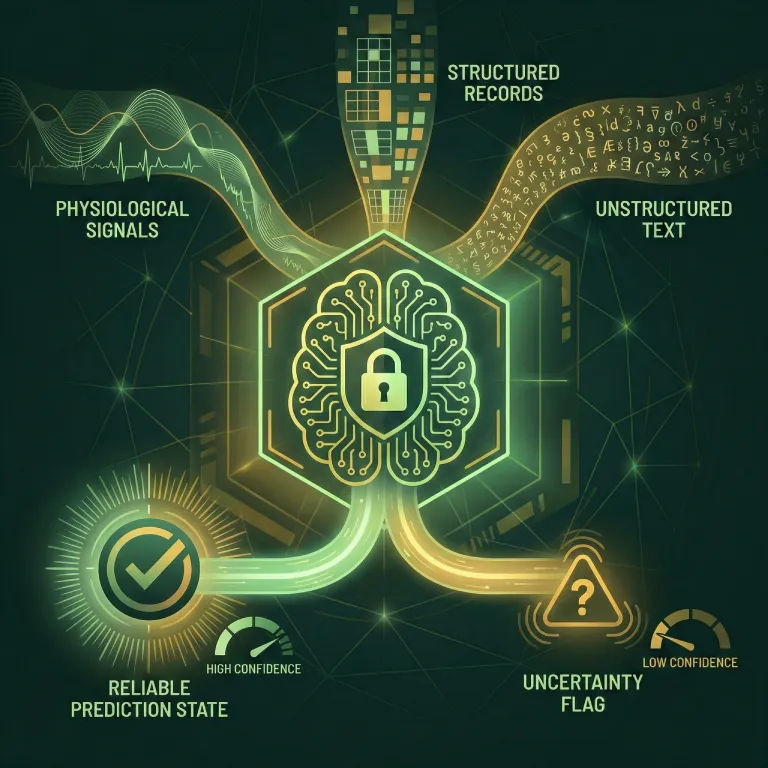

Multimodal Learning for Clinical Decision Support Systems

Description: Integrating structured Electronic Health Records (EHR) and free-text clinical notes using advanced multimodal and foundation models to build reliable predictive systems for patient care.

Challenge: Modern hospital data is highly diverse, encompassing both structured clinical measurements (such as heart rate or lab results) and unstructured free text (such as physician notes or discharge summaries). Traditional AI models struggle to process these very different modalities together, and often miss critical nuances in natural language that are essential for accurate ICU outcome or chronic disease prediction.

Approach: Leveraging multimodal foundation models and belief function theory to fuse structured EHRs with unstructured clinical text. By applying domain adaptation and evidential reasoning, these frameworks align the different data streams while keeping an explicit measure of predictive reliability.

Key Findings: The developed multimodal frameworks significantly improve the accuracy of critical tasks, such as predicting intensive care unit (ICU) outcomes and chronic disease progression. Furthermore, by rigorously evaluating clinical foundation models, this research provides a roadmap for achieving "universal intelligence" in healthcare without compromising clinical safety.

Related Publications: Towards accurate and reliable ICU outcome prediction: a multimodal learning framework based on belief function theory using structured EHRs and free-text notes (Journal of Healthcare Informatics Research, 2025) | Has multimodal learning delivered universal intelligence in healthcare? A comprehensive survey (Information Fusion, 2024)